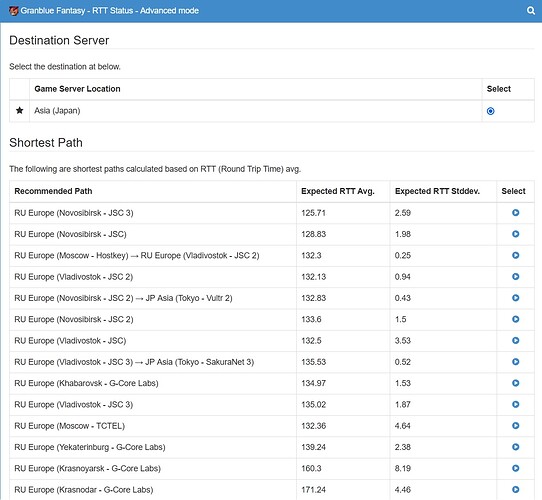

Hello, is something wrong with the Russian nodes lately, specifically Novosibirsk 1-3 and the Vladivostok ones? The connection seems unstable via the browser extension, and the launcher sometimes shows very high RTT too.

@Merem Sorry for the inconvenience. There have been no recent changes on these mudfish nodes, so if the problem is still the same, I guess it might be an upstream ISP issue. Let me check this issue a little bit more.

Can you check what was going on around the time of this post 21.12.2021?

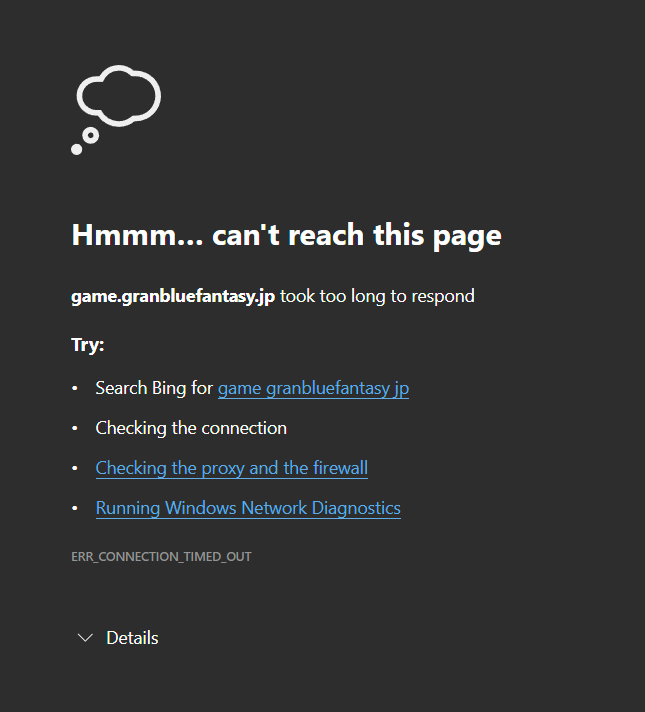

RU Europe (Moscow - AGPL) → RU Europe (Novosibirsk - JSC 3) - the connection seems to be fine, but the game doesn’t respond.

The ping looks fine, but the game server often doesn’t respond or responds with a network error message.

I was using the launcher this time.

@Merem It seems you’re using the advanced node mode. Did you try testing in basic mode with only “RU Europe (Novosibirsk - JSC 3)” set? I’m not sure whether this issue is a problem with the advanced node mode or with the mudfish node itself.

I only used a single node (Novosib 3 mainly) in the browser extension, and its poor performance was the reason I tried the launcher with advanced mode (which was more stable overall). I haven’t tried basic mode with the launcher yet, but I can try.

Honestly, everything worked fine lately so I can’t say that the issue still persists.

I have the same problem with the JP Asia (Hokkaido - SakuraNet 2) node and an SG node (I sadly forgot which one). I’m on the PC app btw, not the Chrome extension.

@kupodesu For your case, please check your ISP first. When I checked the RTT information recorded in Mudfish, the RTT stddev values to most of the mudfish nodes were quite unstable. That suggests your ISP has a network issue. For details, please check the http://ko.loxch.com/userrtt/?uid=583541 link.
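To illustrate why a high RTT stddev points at an unstable path rather than a slow one, here is a minimal Python sketch. The sample values and the `max_stddev_ms` threshold are made up for illustration; they are not taken from Mudfish or from the linked RTT page.

```python
from statistics import mean, stdev


def rtt_summary(samples_ms):
    """Return (mean, sample standard deviation) of RTT samples in milliseconds."""
    if len(samples_ms) < 2:
        raise ValueError("need at least two RTT samples")
    return mean(samples_ms), stdev(samples_ms)


def looks_unstable(samples_ms, max_stddev_ms=20.0):
    """Flag a series as unstable when its stddev exceeds the threshold.

    max_stddev_ms is an arbitrary illustrative cutoff, not a Mudfish setting.
    """
    _, sd = rtt_summary(samples_ms)
    return sd > max_stddev_ms


# Hypothetical samples: both series have a similar best-case RTT,
# but the second one swings widely between measurements.
stable = [52.0, 53.5, 51.8, 52.4, 53.0]
jittery = [52.0, 140.0, 55.0, 310.0, 60.0]

for name, samples in (("stable", stable), ("jittery", jittery)):
    avg, sd = rtt_summary(samples)
    print(f"{name}: mean={avg:.1f} ms, stddev={sd:.1f} ms, unstable={looks_unstable(samples)}")
```

If the stddev is high towards most nodes at once, the common factor is usually the user's own connection or ISP rather than any single node.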

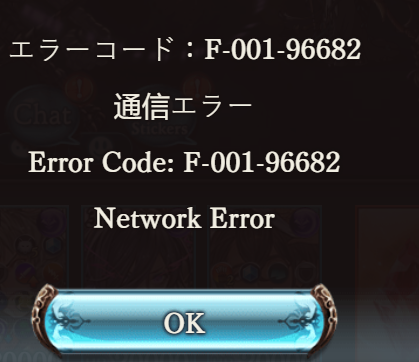

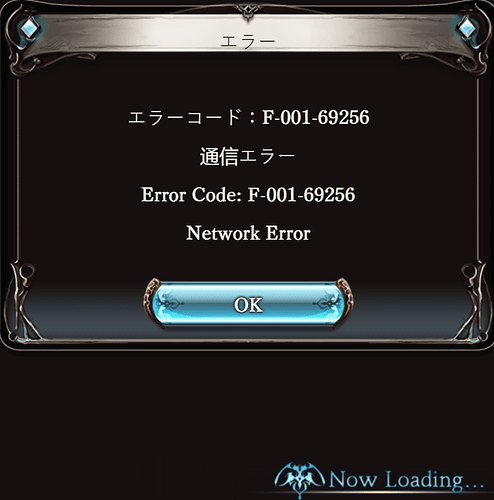

Network errors again. Started with just Novosibirsk JSC 3, tried to use RU Europe (Moscow - AGPL) → RU Europe (Novosibirsk - JSC 3) after that, same errors.

Dunno if it’s the node or my ISP.

@Merem Thank you for these screenshots. Is this issue still happening with this node? At this point, I don’t have enough information to know why. It might be a problem with the mudfish node itself. When this issue happened, did you test with other mudfish nodes too?

Yea, changing the node helps most of the time. I think there was an outage in December when the majority of the nodes were not functioning properly for some time, but it was fixed.

There are a few other people, not from my country, who also use this node (because it’s the lowest ping for all of us), and we have issues with Novosibirsk 3 simultaneously, so it’s probably not the ISP.

It doesn’t happen that often. This node is the fastest available for us, but it’s just not completely stable.

I see. However, is there any reason you didn’t use RU Europe (Novosibirsk - JSC) or RU Europe (Novosibirsk - JSC 2)?

These two mudfish nodes are from the same ISP and are located in the same network block.

Yea, JSC 1-2 are the first candidates to try if the 3rd one is bugging. But normally JSC 3 is the first in the list with the lowest average RTT.

Small bump, but are there any known issues with the Moscow - Tencent node? It’s the one that usually performs best for me, but I’ve been having a lot of issues with it lately.

Having the same issues as the OP with those specific nodes, as well as Moscow - Tencent (which is usually the best for me personally).

Tencent’s average ping doubled for me last week and has remained that way since.

Please test your network status from your desktop to the IP 162.62.9.233 (RU Europe (Moscow - Tencent)) by following the How to use WinMTR link.

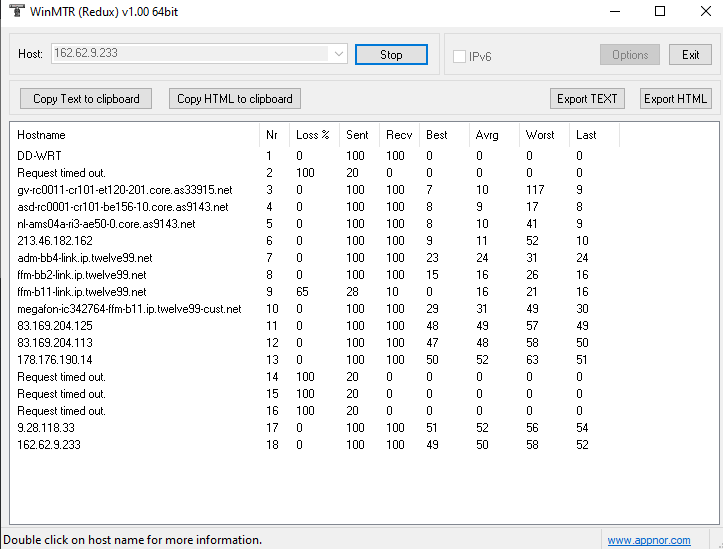

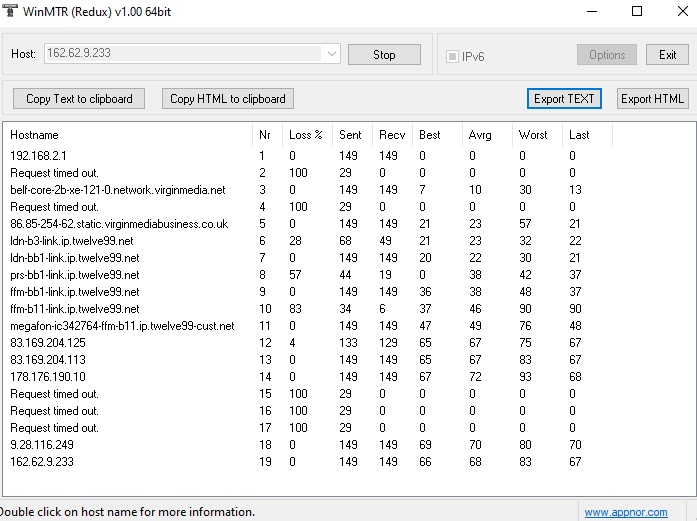

I think this result shows you where this issue is from.

It was fine for two days, back to being pretty terrible today. Not sure if it’s ISP or routing related, my ping checks to other IPs close to this region are all stable and normal.

Thank you for these screenshots. When I checked them, it seems the issue begins at Hop 6 (*.twelve99.net). So it looks like your issue is happening in the middle of the routing path.

To avoid this issue, I think you need to bypass the routes going through the *.twelve99.net hops.
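The way an MTR result is read to find the hop where a problem begins can be sketched in Python as follows. This is only an illustration: the `Hop` type, the thresholds, and every number in the sample trace are hypothetical and are not taken from the screenshots. Only the hop 6 hostname pattern and the destination IP come from this thread.

```python
from dataclasses import dataclass


@dataclass
class Hop:
    index: int
    host: str
    loss_pct: float
    avg_ms: float


def first_suspect_hop(hops, loss_threshold=5.0, jump_ms=50.0):
    """Return the first hop whose loss or latency jump persists to the last hop.

    Loss at a single intermediate hop that disappears on later hops is
    usually just ICMP rate limiting on that router, so it is ignored.
    Both thresholds are arbitrary illustrative values.
    """
    for i, hop in enumerate(hops):
        baseline = hops[i - 1].avg_ms if i > 0 else 0.0
        degraded = hop.loss_pct >= loss_threshold or hop.avg_ms - baseline >= jump_ms
        if not degraded:
            continue
        # Only report the hop if every later hop is still degraded
        # relative to the latency seen just before it.
        if all(
            h.loss_pct >= loss_threshold or h.avg_ms - baseline >= jump_ms
            for h in hops[i:]
        ):
            return hop
    return None


# Hypothetical trace: hop 3 shows loss that does not persist,
# while the latency jump at hop 6 carries through to the destination.
trace = [
    Hop(1, "router.local", 0.0, 1.0),
    Hop(2, "isp-a.example", 0.0, 8.0),
    Hop(3, "isp-b.example", 20.0, 9.0),
    Hop(4, "isp-c.example", 0.0, 10.0),
    Hop(5, "ix.example", 0.0, 12.0),
    Hop(6, "*.twelve99.net", 0.0, 95.0),
    Hop(7, "transit.example", 0.0, 97.0),
    Hop(8, "162.62.9.233", 0.0, 99.0),
]

suspect = first_suspect_hop(trace)
print(suspect.index if suspect else "no persistent problem found")
```

The persistence check is the important design choice here: a hop is only blamed when the degradation is still visible at the final destination.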

I appreciate your reply. For future reference, can we do anything about that, or is it not something we as users have control over? I ran the MTR again just now and I do not seem to go through that hop anymore (and the node also works great now), but if this changes at any time, what can we do about it?